The brightest spots are areas that reflect or emit a lot of that wavelength of light, and darker areas reflect or emit little-if any. Viewing the output from just one band is a bit like looking at the world in shades of gray. Satellite instruments carry many sensors, each tuned to a narrow range, or “band,” of wavelengths. The colder an object is, the longer the peak wavelength it emits. At about 400☌ (750° F)-the temperature of an electric stove burner set to high-the emitted light will begin to be visible. The hotter an object is, the shorter the peak wavelength it emits. Everything gives off energy, usually in the form of heat (thermal infrared radiation).

Some satellite instruments also directly measure the energy emitted by objects. Scientists call these “atmospheric windows” for specific wavelengths, and often satellite sensors are tuned to measure light through these windows. Gases also let a few wavelengths pass through unimpeded. Like Earth’s surfaces, gases in the atmosphere also have unique spectral signatures, absorbing some wavelengths of electromagnetic radiation and emitting others. This unique absorption and reflection pattern is called a spectral signature. Chlorophyll in plants, for example, absorbs red and blue light but reflects green and infrared this is why leaves appear green. Every surface or object absorbs, emits and reflects light uniquely depending on its chemical makeup. When sunlight reaches Earth, the energy is absorbed, transmitted or reflected. Most of the electromagnetic radiation that matters for Earth-observing satellites comes from the sun. At right Southeast Florida is shown in NIR, red and green light. The false-color image in the middle combines SWIR, NIR and green light. The natural-color image at left shows southeast Florida in red, green and blue light. Infrared light and radio waves have longer wavelengths and lower energy than visible light, while ultraviolet light, X-rays and gamma rays have shorter wavelengths and higher energy. Visible light comes in wavelengths of 400 to 700 nanometers, with violet having the shortest wavelengths and red having the longest. The distance between the top of each wave-the wavelength-is smaller for high-energy waves and longer for low-energy waves. All light travels at the same speed, but the waves aren’t all the same. Light is a form of energy-also known as electromagnetic radiation-that travels in waves. So what does a satellite imager measure to produce an image? It measures light we see and don’t see. It all depends on the process used to transform satellite measurements into images. 
But data also can become photo-like natural-color images or false-color images. These observations can be turned into data-based maps that measure everything from plant growth or cloudiness. But the majority of instruments are passive that is, they record light reflected or emitted by Earth’s surface. Some methods are active, bouncing light or radio waves off Earth and measuring the energy returned light detection and ranging (LiDAR) and radar technology are good examples. In fact, remote sensing scientists and engineers are endlessly creative about what they can measure from space, developing satellites with a wide variety of tools to tease information out of our planet.

Some of it is visual, some of it is chemical and some of it is physical.

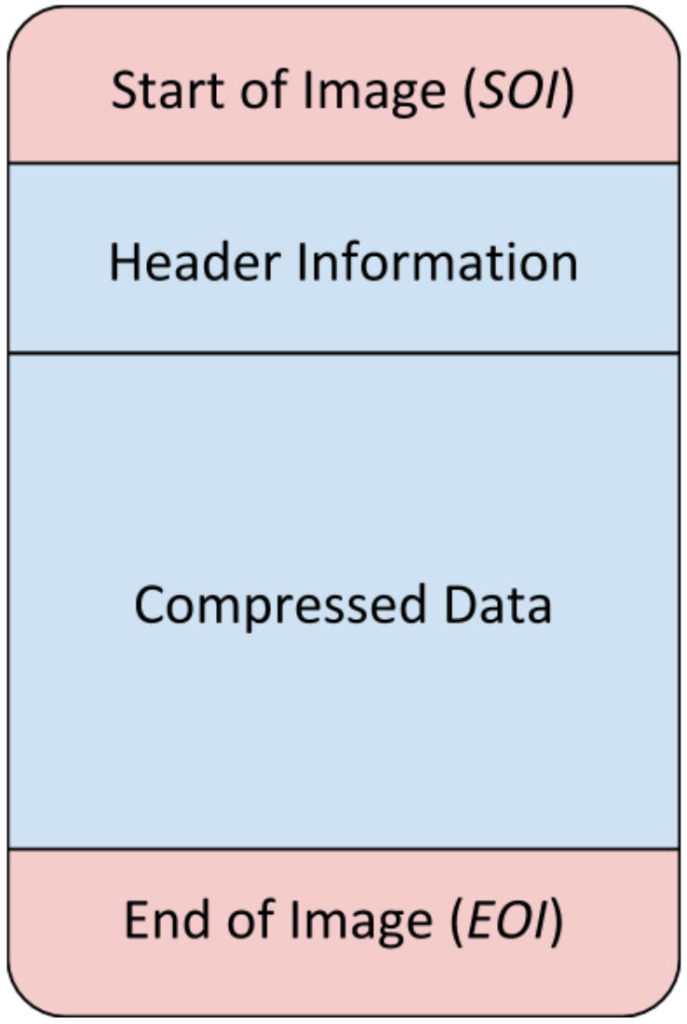

Satellite instruments gather an array of information about Earth. To understand what they mean, it’s necessary to understand exactly what a satellite image is. Because satellites collect information beyond what human eyes can see, images made from other wavelengths of light look unnatural to us. In reality, a red forest is just as real as a dark green one. “That forest is red,” we think, “so the image can’t possibly be real.” We also have that bias when we look at satellite images that don’t represent the Earth’s surface as we see it. Why does the difference matter? When we see a photo where the colors are brightened or altered, we think of it as artful (at best) or manipulated (at worst). A satellite image is created by combining measurements of the intensity of certain wavelengths of light that are visible and invisible to human eyes. A photograph is made when light is focused and captured on a light-sensitive surface such as film or a charge-coupled device in a digital camera. Though they may look similar, photographs and satellite images are fundamentally different. In our photo-saturated world, it’s natural to think of the images on NASA’s Earth Observatory website as snapshots from space. Natural- and false-color images from NASA’s MESSENGER mission to Mercury show plant-covered land from the Amazon rainforest to North American forests.
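The rule that hotter objects emit at shorter peak wavelengths is known as Wien’s displacement law: peak wavelength = b / T, where T is in kelvin. The Python sketch below is an illustration added here and is not part of the original text. It assumes an ideal blackbody, which real surfaces only approximate, and uses the CODATA value of Wien’s constant. The helper names are hypothetical.

```python
# Wien's displacement law: peak wavelength (metres) = b / T, T in kelvin.
# Assumes an ideal blackbody; real surfaces only approximate one.
WIEN_B = 2.897771955e-3  # Wien's displacement constant, metre-kelvin (CODATA)


def celsius_to_kelvin(temp_c: float) -> float:
    """Convert degrees Celsius to kelvin."""
    return temp_c + 273.15


def peak_wavelength_um(temp_kelvin: float) -> float:
    """Return the blackbody peak emission wavelength in micrometres."""
    if temp_kelvin <= 0:
        raise ValueError("temperature must be above absolute zero")
    return WIEN_B / temp_kelvin * 1e6  # metres -> micrometres


if __name__ == "__main__":
    examples = {
        "Earth's surface (about 15 °C)": celsius_to_kelvin(15),
        "stove burner (about 400 °C)": celsius_to_kelvin(400),
        "Sun's surface (about 5,770 K)": 5772.0,
    }
    for name, temp_k in examples.items():
        print(f"{name}: peak near {peak_wavelength_um(temp_k):.2f} µm")
```

Earth’s surface peaks near 10 µm in the thermal infrared, and the Sun peaks near 0.5 µm in visible light. The 400°C burner still peaks around 4.3 µm, in the infrared. At that temperature, only the faint short-wavelength tail of its emission starts to reach the visible range.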
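To display a single band in shades of gray, its intensities can be rescaled to a display range. A color composite then assigns three bands to the red, green and blue display channels. The Python sketch below illustrates that idea under simplifying assumptions. It uses a per-band min-max stretch, whereas real products often clip at percentiles or use fixed reflectance ranges. The reflectance values are made up for three hypothetical pixels (vegetation, water and bare soil). `stretch_to_gray` and `composite` are illustrative names, not functions from any satellite toolkit.

```python
from typing import List, Sequence, Tuple

Band = Sequence[Sequence[float]]  # one band: rows of per-pixel intensities
Pixel = Tuple[int, int, int]      # (red, green, blue) display values, 0-255


def stretch_to_gray(band: Band) -> List[List[int]]:
    """Linearly rescale one band to 0-255 so it can be shown in shades of gray."""
    values = [v for row in band for v in row]
    lo, hi = min(values), max(values)
    span = (hi - lo) or 1.0  # a flat band would otherwise divide by zero
    return [[round(255 * (v - lo) / span) for v in row] for row in band]


def composite(red: Band, green: Band, blue: Band) -> List[List[Pixel]]:
    """Stretch three bands and assign them to the red, green and blue channels."""
    r, g, b = stretch_to_gray(red), stretch_to_gray(green), stretch_to_gray(blue)
    return [list(zip(rr, gr, br)) for rr, gr, br in zip(r, g, b)]


if __name__ == "__main__":
    # Hypothetical reflectances for one row of pixels: vegetation, water, bare soil.
    nir = [[0.45, 0.02, 0.30]]
    red = [[0.05, 0.03, 0.25]]
    green = [[0.10, 0.06, 0.20]]
    # NIR -> red channel, red -> green channel, green -> blue channel.
    print(composite(nir, red, green))
    # -> [[(255, 23, 73), (0, 0, 0), (166, 255, 255)]]
```

Because the near-infrared band drives the red display channel, the vegetation pixel comes out mostly red. This is why healthy forests look red in this kind of false-color image. Feeding the red, green and blue bands into the same function instead would give an approximate natural-color view.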
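Maps of plant growth often exploit the chlorophyll signature: healthy leaves reflect strongly in the near-infrared and absorb red light. One widely used way to condense this into a single number per pixel is the Normalized Difference Vegetation Index, NDVI = (NIR − Red) / (NIR + Red). It is offered here as one common choice, not necessarily the method behind any particular map. The Python sketch below assumes surface reflectances as inputs, and its reflectance values are again hypothetical. The choice to return 0 when both bands are zero is an assumption of this sketch.

```python
from typing import List, Sequence


def ndvi(nir: float, red: float) -> float:
    """Return NDVI = (NIR - Red) / (NIR + Red) for one pixel's reflectances."""
    total = nir + red
    if total == 0:
        # Assumption: a pixel with no signal in either band is scored as 0.
        return 0.0
    return (nir - red) / total


def ndvi_image(nir_band: Sequence[Sequence[float]],
               red_band: Sequence[Sequence[float]]) -> List[List[float]]:
    """Apply ndvi() pixel by pixel to two equally sized bands."""
    return [[ndvi(n, r) for n, r in zip(nir_row, red_row)]
            for nir_row, red_row in zip(nir_band, red_band)]


if __name__ == "__main__":
    # Hypothetical reflectances for one row: vegetation, water, bare soil.
    nir = [[0.45, 0.02, 0.30]]
    red = [[0.05, 0.03, 0.25]]
    labels = ["vegetation", "water", "bare soil"]
    for label, value in zip(labels, ndvi_image(nir, red)[0]):
        print(f"{label}: NDVI = {value:.2f}")
```

With these made-up values, vegetation scores 0.8, water comes out negative (−0.2) and bare soil lands near zero (about 0.09). That spread is what lets a single index separate plants from their surroundings.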